Not everything needs AI. At the service desk, I saw hesitation that analytics would never capture. The interface worked. But people still paused. This project explores how design, not automation, can restore confidence.

Decision Logic vs. AI

Digital banking is fast.

But money is emotional.

During three years working at the ING service desk, I supported customers, many of them seniors, who were struggling not with ability, but with uncertainty.

High-risk actions such as making payments triggered hesitation, repeated verification, and visible anxiety.

The interface was not unusable.

It felt unsafe.

ING Senior Assist explores whether digital banking can feel as calm and supportive as being guided by a service desk representative — specifically for senior users who experience uncertainty in high-risk financial moments.

Should critical financial flows rely on adaptive AI personalization —

or on deterministic, predictable system behavior?

Rather than adding more intelligence, the design challenge became:

When should intelligence step back?

Working directly with customers revealed something analytics cannot show: hesitation.

Not confusion.

Not inability.

Hesitation.

Some users read every word before tapping.

Some verified amounts three or four times.

Some navigated confidently — until the moment of confirmation.

The interface was functioning correctly.

But many users still paused.

They weren’t looking for speed.

They were looking for reassurance.

In high-risk financial moments, they needed visible confirmation — that the amount was correct, the account was correct, and nothing would happen without their clear intent.

More than anything, they needed the steady guidance a service desk representative provides.

Over time, patterns emerged:

• Hesitant but compliant

• Anxious and verification-seeking

• Confident but easily derailed

This project is grounded in field exposure.

Over three years at the service desk, I supported real customers navigating real financial actions. Due to privacy regulations, no customer data was recorded or stored. Insights were derived from behavioral observation and direct conversation.

This was complemented by:

• Heuristic evaluation of existing payment flows

• Informal interviews about emotional experience

• Simulation experiments in Google Colab

The goal of the simulation was not to optimize prediction.

It was to evaluate predictability.

To evaluate whether AI-driven personalization would improve or undermine confidence, I simulated senior interaction profiles using anonymized and synthetic behavioral assumptions.

I compared deterministic decision logic with probabilistic ML adaptation.

I wasn’t testing how accurately the system could predict behavior.

I was testing whether adaptive behavior would make the interface feel less stable.

Because no real customer data could be used, these behavioral profiles were generated using ChatGPT, structured into simulation-ready variables, and then modeled in Google Colab.

In financial contexts, stability is not technical uptime.

It is psychological consistency.

Small variations in the interface increase cognitive monitoring during high-risk actions.

When users must “check the system,” trust drops.

To make this difference visible, I mapped both approaches across three dimensions:

• Step order stability

• Confirmation consistency

• Visual emphasis variation
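The first of these dimensions can be made measurable with very little code. The following is a minimal Python sketch, not the original Colab notebook: the step names, the 30% adaptation rate, and the stability metric are all illustrative assumptions. It covers step order stability only; confirmation consistency and visual emphasis variation could be scored the same way.

```python
import random

# Hypothetical payment steps; the real flow may differ.
STEPS = ["recipient", "amount", "review", "confirm"]


def deterministic_flow(_rng):
    """Rule-based flow: every session sees the same step order."""
    return list(STEPS)


def adaptive_flow(rng, adapt_rate=0.3):
    """Stand-in for ML personalization (assumed behavior): with
    probability adapt_rate, the two data-entry steps are swapped."""
    steps = list(STEPS)
    if rng.random() < adapt_rate:
        steps[0], steps[1] = steps[1], steps[0]
    return steps


def step_order_stability(flow, sessions=1000, seed=42):
    """Share of sessions whose step order matches the first session."""
    rng = random.Random(seed)
    orders = [tuple(flow(rng)) for _ in range(sessions)]
    return sum(order == orders[0] for order in orders) / sessions


print("deterministic:", step_order_stability(deterministic_flow))
print("adaptive:", step_order_stability(adaptive_flow))
```

The deterministic flow scores 1.0 by construction. The adaptive stand-in scores lower, which is the point of the comparison: any adaptation, however well-meant, shows up as variation the user has to monitor.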

The goal was not optimization.

It was predictability.

Machine learning can optimize flows.

It can reduce friction.

It can personalize.

But it also introduces variation.

In a payment context, variation becomes uncertainty.

For digitally confident users, adaptive shifts may feel efficient.

For anxious users in high-risk moments, the same shifts feel unstable.

In regulated banking environments, explainability matters.

Predictability matters more than personalization.

The decision was deliberate:

• Critical payment steps use deterministic, human-designed decision logic

• AI is limited to supportive roles — voice interaction, guidance cues, contextual reassurance

AI assists.

It does not decide.

Many accessibility improvements benefit everyone.

ING Senior Assist explores a different approach: intentional segmentation.

The slower pacing, repeated confirmations, and persistent visual guidance are designed for users who value reassurance over speed.

For younger users prioritizing efficiency, this experience would feel slow.

Creating a dedicated flow allows both groups to receive an interface aligned with their expectations — without compromising either.

This is not simplification.

It is intentional pacing.

The interface is inspired by the physical service desk.

At a bank branch:

• The representative points to what comes next

• They wait

• They confirm before proceeding

• They do not act without explicit consent

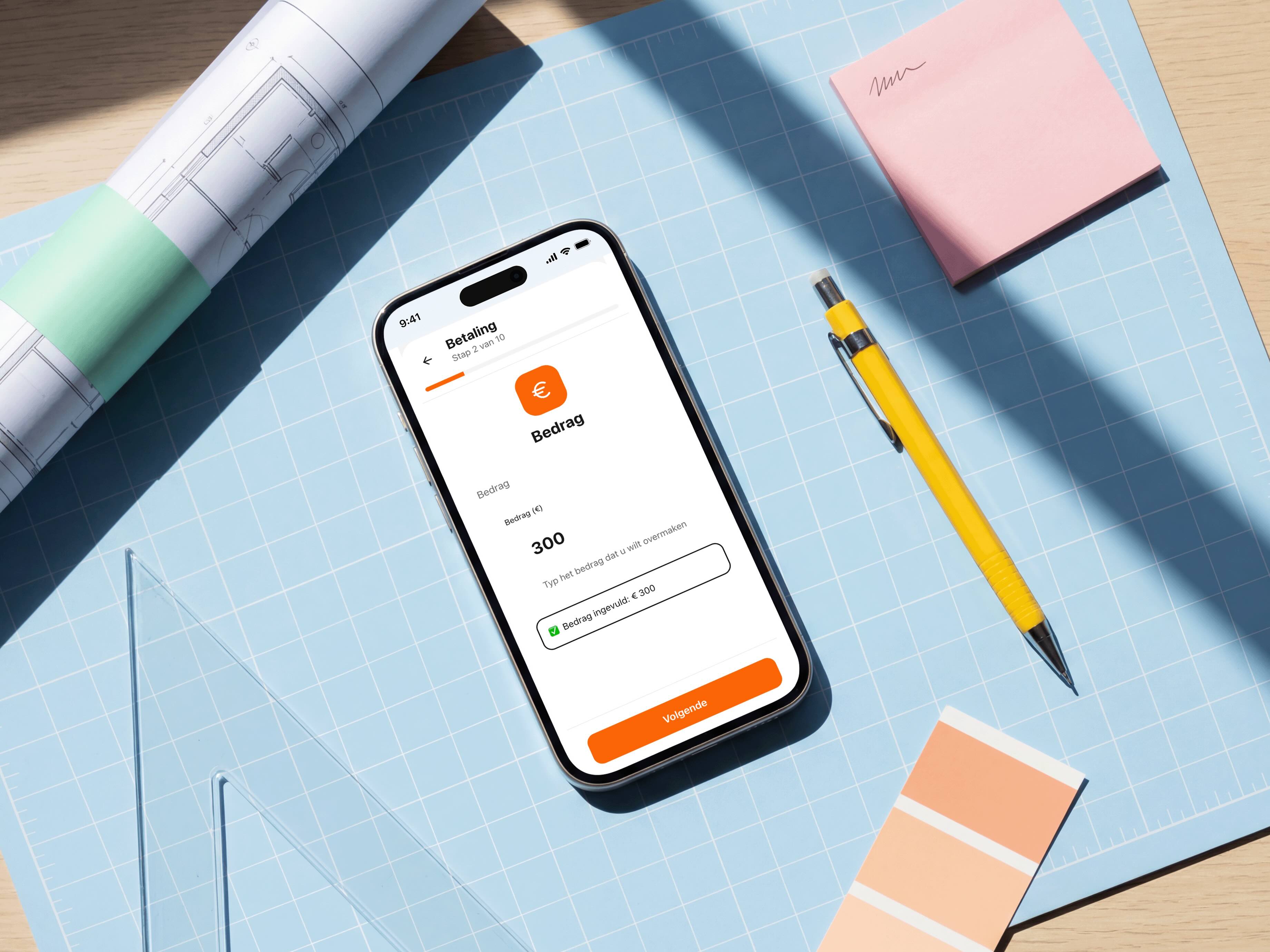

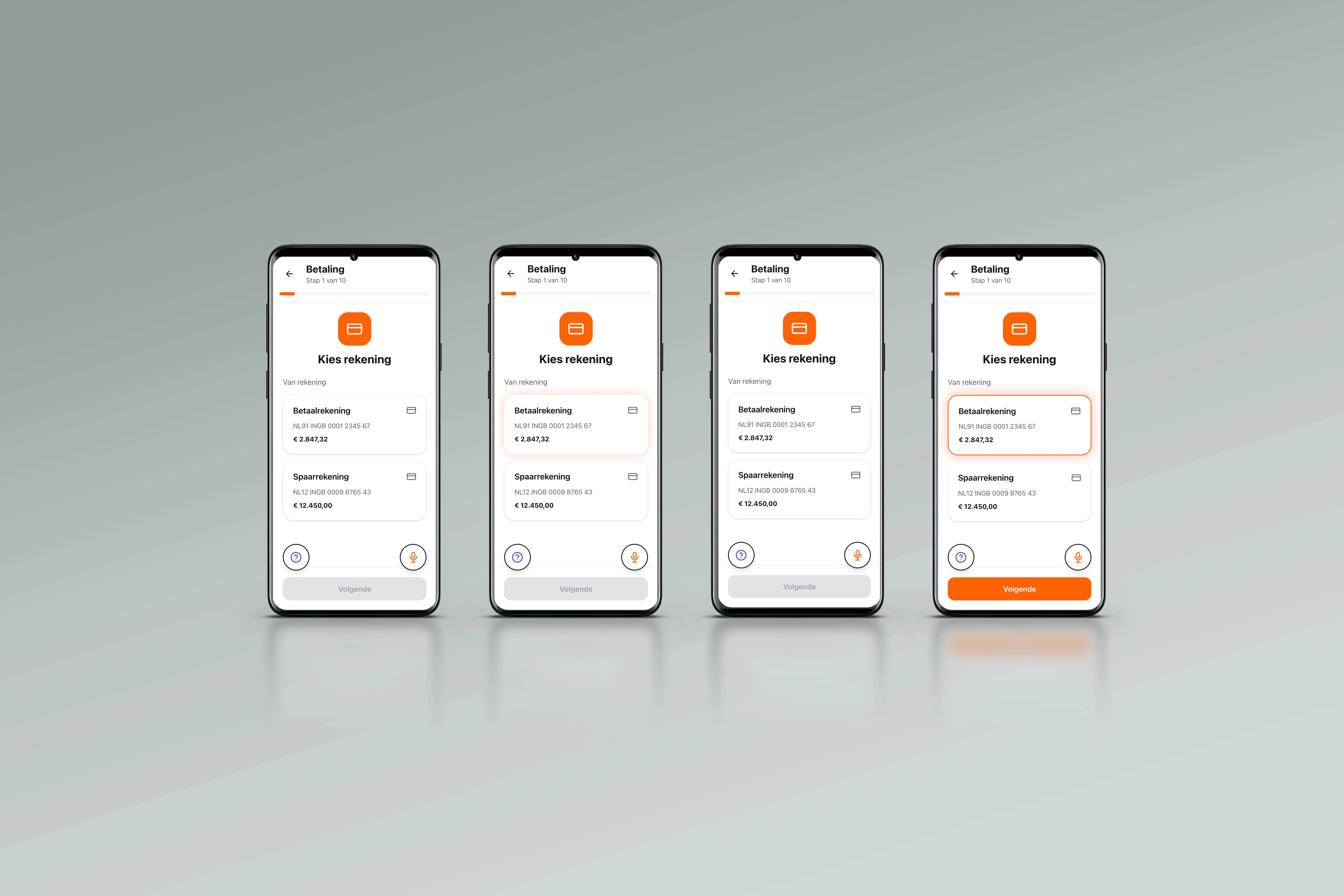

The digital flow mirrors this behavior:

• Buttons begin neutral

• After a short delay, the next step is softly highlighted

• When selected, the choice remains visibly confirmed

• Nothing progresses automatically

The system does not rush.

It reassures.

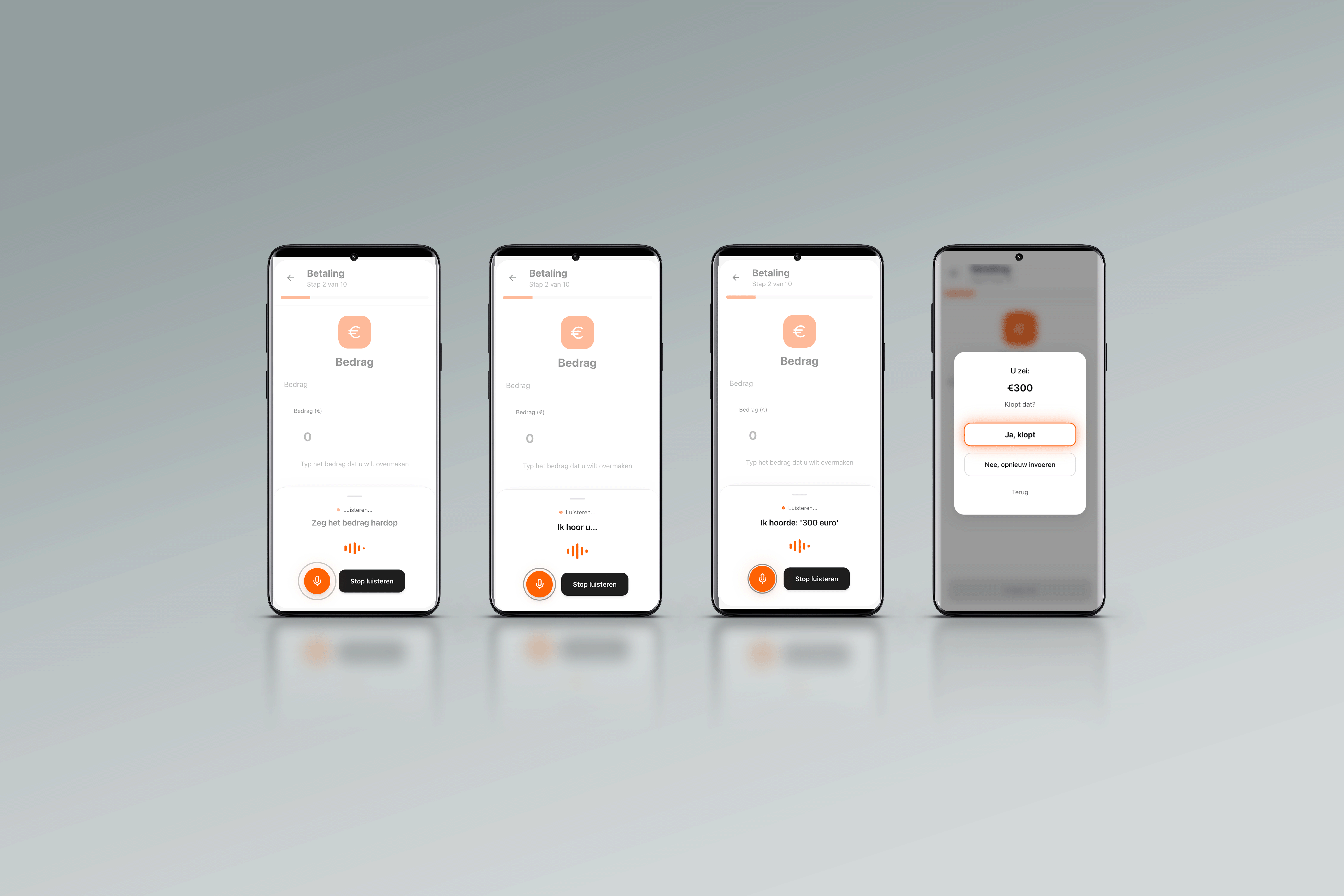

Rather than simplifying the interface further, the focus moved toward introducing a guiding presence, similar to the role of a service desk representative.

Voice prompts the next step. The interface listens, confirms what it heard, and waits for approval before moving forward. The glow shows where attention is needed.

"Who is the payment for?" "What amount would you like to transfer?" "You want to transfer €300. Is that correct?"

The flow itself stays rule-based and unchanged. AI interprets voice input. It does not make decisions.
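One way to picture this separation is a fixed flow that accepts input from a voice layer but only commits a value after explicit approval. The sketch below is hypothetical: `interpret_voice`, `PaymentFlow`, and the two step names are illustrative, not the prototype's actual implementation, and the voice layer is a stub.

```python
from dataclasses import dataclass, field
from typing import Optional

# Fixed, human-designed step order. Nothing here is learned or adapted.
STEPS = ("recipient", "amount")


def interpret_voice(utterance: str) -> str:
    """Placeholder for the AI voice layer (hypothetical).
    It only turns speech into text; it never advances the flow."""
    return utterance.strip()


@dataclass
class PaymentFlow:
    values: dict = field(default_factory=dict)
    pending: Optional[str] = None  # value heard but not yet approved

    @property
    def current_step(self) -> Optional[str]:
        """First step without a confirmed value, or None when done."""
        for step in STEPS:
            if step not in self.values:
                return step
        return None

    def hear(self, utterance: str) -> str:
        """Store what was heard and ask the user to confirm it."""
        self.pending = interpret_voice(utterance)
        return f"You said {self.pending!r} for {self.current_step}. Is that correct?"

    def confirm(self, approved: bool) -> None:
        """Only an explicit approval commits the value and moves on."""
        step = self.current_step
        if approved and self.pending is not None and step is not None:
            self.values[step] = self.pending
        self.pending = None


flow = PaymentFlow()
flow.hear("Anna")
flow.confirm(approved=False)  # user says no: nothing is stored
print(flow.current_step)      # still "recipient"
flow.hear("Anna")
flow.confirm(approved=True)
print(flow.current_step)      # now "amount"
```

The voice layer can be swapped for any speech model without touching the flow, because the flow never asks it what to do next.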

Reassurance becomes part of the interface — not something you have to leave the app to find.

ING Senior Assist is built on a controlled interaction grammar.

Reassurance is not added through messaging.

It is embedded into state behavior.

All interactive elements follow a consistent three-state structure:

• Neutral

• Guided

• Confirmed

Neutral removes bias.

Guided introduces soft emphasis after a timed delay.

Confirmed creates persistent visual stability.

The guided state is expressed through a soft orange glow.

The glow is diffused and gradual — never abrupt, never flashing. It appears after a short delay to avoid perceived urgency.

It functions as directional guidance, similar to how a service desk representative subtly indicates the next step with a gesture. It does not command action. It suggests sequence.

When the user selects an element, the glow transitions into a persistent outline. The outline remains visible to anchor the decision in memory.
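The three states can be expressed as a small state machine. This is a minimal sketch under stated assumptions: the class name `GuidedElement` and the 2-second delay are hypothetical, since the text only specifies "a short delay".

```python
from enum import Enum


class State(Enum):
    NEUTRAL = "neutral"
    GUIDED = "guided"
    CONFIRMED = "confirmed"


GUIDE_DELAY_S = 2.0  # assumed value; the design only says "a short delay"


class GuidedElement:
    """One interactive element following Neutral -> Guided -> Confirmed."""

    def __init__(self):
        self.state = State.NEUTRAL
        self.elapsed = 0.0

    def tick(self, seconds: float) -> None:
        """Advance time. The glow appears only after the delay,
        and time alone never confirms anything."""
        if self.state is State.NEUTRAL:
            self.elapsed += seconds
            if self.elapsed >= GUIDE_DELAY_S:
                self.state = State.GUIDED

    def select(self) -> None:
        """Only an explicit user selection confirms the element.
        Once confirmed, the state persists."""
        self.state = State.CONFIRMED


button = GuidedElement()
button.tick(1.0)
print(button.state)  # State.NEUTRAL
button.tick(1.0)
print(button.state)  # State.GUIDED
button.select()
print(button.state)  # State.CONFIRMED
```

Because `tick` can only move an element from Neutral to Guided, the rule that nothing progresses automatically is enforced by the structure itself rather than by convention.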

The consistency of state transitions is more important than visual styling. Predictable state behavior reduces cognitive overhead and reinforces trust.

This sequence is universal. It does not change per screen. The same pattern — voice in, glow out, confirm — is designed to work across the entire app. Every flow. Every step. One consistent language of guidance. Because trust only works if it's consistent.

This experience is slower.

It requires explicit confirmation steps.

It does not auto-optimize for speed.

But in financial contexts, speed is not the primary KPI.

Trust is.

Limiting machine learning in critical flows reduces automation potential, but increases explainability and perceived safety.

That trade-off is intentional.

Many of the conversations I handled at the service desk were not about technical errors.

They were about reassurance.

“What do I need to press?”

“Is this the right button?”

“Can you check if I did it correctly?”

These interactions take time, even when nothing is wrong.

If a large bank handles approximately 200 reassurance-driven interactions per day, and each interaction costs around €5–€10 in staff time, that represents roughly €365,000–€730,000 per year in operational effort (200 interactions × 365 days × €5–€10).

The hypothesis is simple:

If predictable system behavior increases psychological safety in high-risk payment flows, reassurance-driven interactions may decrease.

Even a modest 10% reduction could create measurable operational savings, roughly €36,500–€73,000 per year under these assumptions, while improving user confidence.
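The estimate is simple enough to check by hand, but writing it down makes the assumptions explicit. In this sketch, the 365-day year is an assumption implied by the annual figures, and the function name is illustrative.

```python
def annual_cost(per_day: int, cost_eur: int, days: int = 365) -> int:
    """Yearly staff cost of reassurance-driven interactions, in euros."""
    return per_day * cost_eur * days


low = annual_cost(200, 5)    # low estimate: EUR 5 per interaction
high = annual_cost(200, 10)  # high estimate: EUR 10 per interaction
reduction = 0.10             # the hypothesized 10% decrease

print(f"Annual effort: EUR {low:,} to EUR {high:,}")
print(f"Saving at 10%: EUR {low * reduction:,.0f} to EUR {high * reduction:,.0f}")
```

Changing any single input (fewer staffed days, a different cost per interaction) shifts the range proportionally, so the figures are best read as an order of magnitude rather than a forecast.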

Trust is not only emotional.

It has operational consequences.

ING Senior Assist reframes digital banking as a confidence-building environment.

It demonstrates:

• Strategic restraint in AI deployment

• Translating field experience into system logic

• Designing interaction timing intentionally

• Prioritizing emotional safety in regulated contexts

This project is not about making banking smarter.

It is about making confidence visible.

Designing for aging is not about larger fonts alone.

It is about mental models formed in different technological eras.

Many older adults grew up with mechanical interfaces where controls were visible and fixed. Modern layered digital systems require navigation, abstraction, and adaptation.

When interfaces change silently, trust erodes.

Every generation eventually becomes unfamiliar with new technology.

Designing for predictability today is designing for everyone’s future self.

This prototype was built in Figma Make, with AI used as a thinking partner during iteration. ChatGPT helped test interaction logic and refine timing patterns, particularly around guidance and confirmation states.

All explorations were based on synthetic behavioral assumptions. No internal banking data or customer information was used.

AI assisted the work.

The decisions were mine.