Flippy turns difficult thoughts into gentler perspectives.

What happens when a playful vibecoding experiment turns into a real AI product and suddenly raises questions about privacy, transparency, and responsibility?

The idea did not begin as a product plan.

While exploring vibecoding and AI development tools, I came across a concept shared by a technical creator online: using AI to flip negative thoughts into a different perspective.

I liked the idea immediately.

Not as a product at first, but as something fun to try building myself — almost like a small experiment or game to see what I could create using vibecoding.

The concept itself is familiar to many people. Our most difficult moments are often shaped by the way we talk to ourselves. Negative self-talk can quickly spiral into overwhelming thoughts:

“I feel like I failed today.”

“Everything feels overwhelming.”

“I’m not good enough.”

The basic interaction was simple:

Write a thought → receive a different perspective.

At the beginning, the goal was simply to see if I could build this interaction using AI-assisted development tools.

But once the system started working and real text could be entered into the interface, the project became more serious than I initially expected.

Questions about privacy, responsibility, and the role of AI in emotional tools began to emerge.

What started as a vibecoding experiment gradually became an exploration of responsible AI design.

Flippy was built as a vibecoding experiment using Lovable.

I started by jumping in and prompting, trying ideas and observing how the system responded. There was no detailed plan at the beginning — the product gradually took shape through experimentation.

Some prompts worked immediately, while others produced unexpected results. A change that fixed one part of the system could affect something else, which meant features sometimes had to be rewritten or simplified. In several cases it took many iterations to remove behavior that I did not intend, and I went through a large number of Lovable credits while refining the system.

Flippy was built with an earlier version of Lovable. In that version, styling and layout changes often depended on shared tokens or global settings, which made visual adjustments slower and sometimes difficult to control.

Newer versions of Lovable allow visual elements to be adjusted more directly and can ask clarifying questions before implementing changes. This makes iteration faster while helping translate design intentions into more accurate system behavior.

Building Flippy also meant learning how Lovable interprets instructions. Clear prompts usually worked well, while vague prompts often produced inconsistent results.

When prompts or generated code did not behave as expected, I used tools such as ChatGPT or Claude to analyze the problem and generate more structured, code-based prompts. These instructions proved more reliable than describing changes only in words and helped stabilize the system.

Different AI tools therefore played complementary roles during development. Lovable handled the interface generation and application structure, while tools such as ChatGPT or Claude helped analyze code, refine prompts, and debug unexpected behavior.

Over time the development process formed a loop between design intention and AI execution:

Designer / Idea

↓

AI Prompting

↓

AI Tools (Lovable, ChatGPT, Claude)

↓

Working Product (Flippy)

Because everything happened in a live environment, ideas could be tested immediately without sketches or waiting for implementation.

Working this way created something of a love–hate relationship with the process. It could be unpredictable and occasionally frustrating, but it was also fast, engaging, and surprisingly effective for turning ideas into working interactions.

Progress was rarely linear. Some changes improved one part of the system while affecting another in unexpected ways. Over time the product gradually improved through many small adjustments.

Rather than replacing design decisions, AI tools became part of the development workflow — translating design intentions into working product behavior.

The interaction flow is intentionally simple.

The flow has three steps:

• the user writes a thought that feels difficult

• Flippy generates a reframed perspective

• the reflection can optionally be saved in a reflection journey

Over time, users can revisit previous reflections and observe small shifts in how they interpret situations.

The experience is designed to feel calm and supportive rather than analytical or performance-driven.

Instead of measuring emotional performance, Flippy focuses on small perspective shifts.

Early versions displayed statistics such as:

“50% of reflections improved your mood.”

While technically interesting, this framing felt too analytical for a reflective experience.

Instead, these insights were translated into supportive language:

“Some of your reflections helped shift your perspective this week.”

This small change made the interaction feel significantly more human.

Design Insight

Quantifying emotional progress can unintentionally turn reflection into a performance task.

Replacing metrics with supportive language keeps the experience focused on reflection rather than measurement.
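
As an illustration, the translation from a percentage into supportive wording could look something like the Python sketch below. It is not Flippy's actual code: the function name, the thresholds, and every sentence except the "Some of your reflections..." line quoted above are assumptions made for the example.

```python
def supportive_summary(helpful: int, total: int) -> str:
    """Turn raw reflection counts into a gentle sentence that shows no numbers."""
    if total <= 0:
        # Hypothetical wording for an empty week.
        return "Your reflections will be here whenever you are ready."
    share = helpful / total
    # The thresholds below are assumptions chosen for illustration.
    if share == 0:
        return "Taking time to reflect this week was a step in itself."
    if share < 0.34:
        return "A few of your reflections helped shift your perspective this week."
    if share < 0.67:
        return "Some of your reflections helped shift your perspective this week."
    return "Many of your reflections helped shift your perspective this week."


# 5 helpful reflections out of 10 is the "50%" case from the early version.
print(supportive_summary(5, 10))
```

The count still drives the message, but the user only ever sees the sentence, never the figure behind it.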

Flippy is represented by a small character that acts as the emotional center of the interface.

The goal was not to create a conversational AI personality, but to introduce a gentle visual presence that makes the interface feel approachable.

Design elements include:

• a prominent character on the main screen

• subtle breathing animations

• soft visual styling

• customizable companion options

These choices create warmth without encouraging emotional dependence on the system.

Flippy is designed to feel supportive, but clearly remains a digital tool.

Reflection happens in quiet moments. Flippy is designed to fit naturally into them.

Reflection often happens during quiet moments throughout the day.

Because of this, the interface was designed primarily for mobile use.

Special attention was given to:

• the character introduction during onboarding

• readable text inputs

• safe mobile layouts

• important buttons that remain visible on smaller screens

The system behind Flippy is intentionally simple.

User writes a thought

↓

Prompt structure

↓

Language model

↓

Generated reframe

↓

Flippy interface

A thought is written, shaped into a prompt, processed by the model, and returned as a short reflection.
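
A minimal Python sketch of that pipeline is shown here, under stated assumptions. The prompt wording is a placeholder, the function names are hypothetical, and the model is passed in as a plain callable so that no particular provider or API is implied.

```python
from typing import Callable

# Placeholder instruction text; the real prompt wording is not published in this article.
SYSTEM_PROMPT = (
    "Offer one small, gentle shift in perspective on the thought you receive. "
    "Keep the reply to one or two short sentences."
)


def build_prompt(thought: str) -> list[dict[str, str]]:
    """Shape the user's thought into a chat-style message list."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": thought.strip()},
    ]


def reframe(thought: str, model: Callable[[list[dict[str, str]]], str]) -> str:
    """Send the shaped prompt to the model and return its short reply."""
    return model(build_prompt(thought)).strip()


def fake_model(messages: list[dict[str, str]]) -> str:
    """Stand-in for a language model so the sketch runs without any API."""
    return "Maybe today held more than one kind of moment. "


print(reframe("  I feel like I failed today.  ", fake_model))
```

Keeping the model behind a callable means the prompt shaping can be tested on its own, separately from whichever model sits behind it.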

What mattered was not the structure itself, but how the system behaves.

The prompt was designed to keep Flippy in a very narrow role. It does not analyze the user or try to understand them deeply. Instead, it offers small shifts in perspective — nothing more.

This required restraint.

The system avoids sounding certain, avoids giving advice, and avoids interpreting what someone is feeling. Responses stay short, soft, and slightly open, so they can be taken or left.

For example, when a user writes "I hate myself," the system does not reflect that language back, does not offer solutions, and does not escalate into crisis support. It responds with a gentler lens on the moment, without assuming more than it knows.

Not every input is clear. Some thoughts are fragmented, repetitive, or difficult to interpret. In those moments, Flippy does not try to force meaning. It stays simple, and gently redirects.

One edge case required particular care. When input signals serious distress, Flippy does not attempt to reframe at all. Instead, the system detects the signal and surfaces a crisis support modal — localised by region, calm in tone, and direct about what Flippy cannot do. The response is contained. The support is real.
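
The routing described above can be sketched roughly as follows. This is an assumption-heavy illustration, not Flippy's detection logic: the phrase list, the region table, and all names are hypothetical, and a real detector would need far more careful, expert-reviewed design than substring matching.

```python
from dataclasses import dataclass
from typing import Callable

# Illustrative phrases only (assumption); not a real or complete detection list.
DISTRESS_SIGNALS = ("hurt myself", "end my life", "don't want to be here")

# Placeholder text (assumption); real entries would list verified services per region.
SUPPORT_BY_REGION = {
    "default": "Flippy can't help with this. Please contact a local crisis service or someone you trust.",
}


@dataclass
class Result:
    kind: str  # "reframe" or "crisis_modal"
    text: str


def signals_distress(thought: str) -> bool:
    """Check the thought against the illustrative phrase list."""
    lowered = thought.lower().replace("\u2019", "'")  # normalize curly apostrophes
    return any(signal in lowered for signal in DISTRESS_SIGNALS)


def respond(thought: str, region: str, reframe: Callable[[str], str]) -> Result:
    """Show the support modal on a distress signal; otherwise reframe as usual."""
    if signals_distress(thought):
        # The reframe function is never called on this path.
        text = SUPPORT_BY_REGION.get(region, SUPPORT_BY_REGION["default"])
        return Result("crisis_modal", text)
    return Result("reframe", reframe(thought))


gentle = lambda thought: "Maybe this moment is heavier than the whole day."
print(respond("I hate myself", "default", gentle).kind)          # reframe
print(respond("I want to end my life", "default", gentle).kind)  # crisis_modal
```

The point of the structure is the early return: once a signal is detected, the model is bypassed entirely rather than asked to respond more carefully.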

The goal was not capability. It was care — for what the system does, and equally for what it refuses to do.

The architecture remains intentionally minimal.

Flippy processes reflections only to generate a supportive response. By default, reflections remain temporary unless the user chooses to save them.

User Input

↓

AI Processing

↓

Generated Response

↓

Displayed in Interface

↓

(Optional) Save locally

If a reflection is saved, it remains stored locally on the user’s device rather than on external servers.

This approach reduces unnecessary data exposure and keeps the system easier to understand.
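
The "temporary unless saved" behavior could be sketched like this. It is an illustration only: a local JSON file stands in for on-device storage, and the function name, field names, and file layout are assumptions rather than Flippy's implementation.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


def handle_reflection(thought: str, response: str, save_to: Optional[Path] = None) -> dict:
    """Return the reflection for display; write it to disk only if a path is given."""
    entry = {
        "thought": thought,
        "response": response,
        "created_at": datetime.now(timezone.utc).isoformat(),
    }
    if save_to is not None:  # the user chose to save this reflection
        entries = json.loads(save_to.read_text(encoding="utf-8")) if save_to.exists() else []
        entries.append(entry)
        save_to.write_text(json.dumps(entries, ensure_ascii=False, indent=2), encoding="utf-8")
    return entry


with tempfile.TemporaryDirectory() as folder:
    journal = Path(folder) / "journal.json"
    handle_reflection("Everything feels overwhelming.", "Maybe one small thing is enough for now.")
    print(journal.exists())  # False: nothing is stored by default
    handle_reflection(
        "Everything feels overwhelming.",
        "Maybe one small thing is enough for now.",
        save_to=journal,
    )
    print(len(json.loads(journal.read_text(encoding="utf-8"))))  # 1
```

Making persistence an explicit argument keeps the default path free of any write, so saving can only happen as a deliberate choice.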

People write real thoughts into Flippy. Even short reflections can contain emotional or sensitive information. This made it important to design the product — not just the AI — in a way that protects the user.

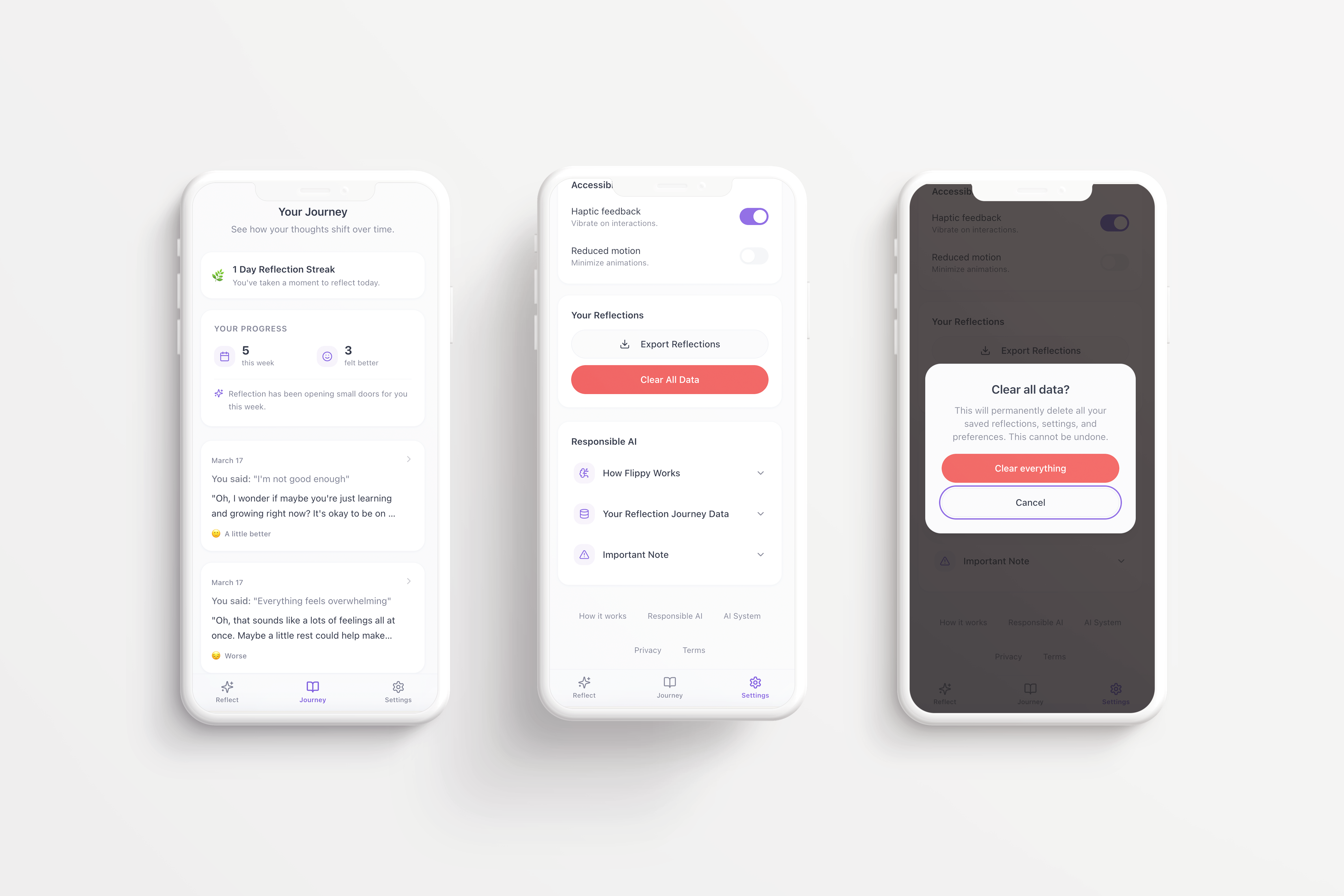

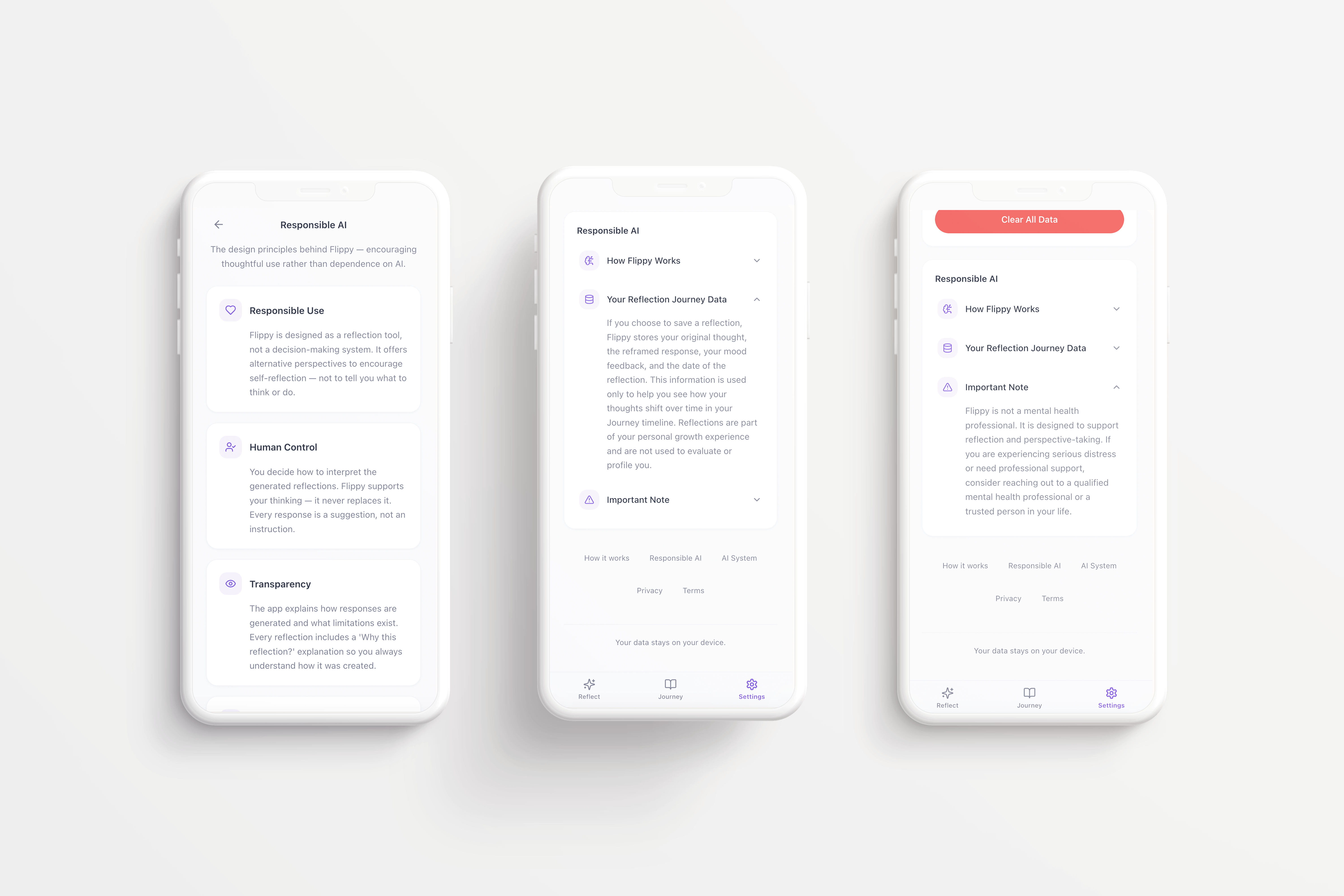

Transparency became part of the interface itself. The app includes a Responsible AI section explaining how the system works, how reflections are handled, and what the tool cannot do. Flippy is a simple reflection aid. It does not itself provide therapy, professional advice, or crisis support.

Users remain in control of their data at all times. Saved reflections are stored locally on the device, never on external servers. Entries can be exported or permanently deleted whenever the user chooses.
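
Export and deletion can be very small operations when the data lives in one local place. The sketch below assumes, purely for illustration, that saved reflections sit in a single local JSON file; the function names and file names are hypothetical.

```python
import json
import tempfile
from pathlib import Path


def export_entries(journal: Path, destination: Path) -> int:
    """Copy saved reflections to a user-chosen file; return how many were exported."""
    entries = json.loads(journal.read_text(encoding="utf-8")) if journal.exists() else []
    destination.write_text(json.dumps(entries, ensure_ascii=False, indent=2), encoding="utf-8")
    return len(entries)


def delete_all(journal: Path) -> None:
    """Permanently remove the local journal file, if there is one."""
    journal.unlink(missing_ok=True)


with tempfile.TemporaryDirectory() as folder:
    journal = Path(folder) / "journal.json"
    journal.write_text(json.dumps([{"thought": "t", "response": "r"}]), encoding="utf-8")
    print(export_entries(journal, Path(folder) / "export.json"))  # 1
    delete_all(journal)
    print(journal.exists())  # False
```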

What started as a vibecoding experiment gradually became an exploration of something more considered — how even a small, lightweight AI product carries responsibility the moment real people start using it.

AI systems should not only be built responsibly — they should also be monitored over time.

Even when a system works as intended, changes in language models, infrastructure, or prompts can influence how responses are generated. Because of this, Flippy would require ongoing review to ensure the system continues to behave within its intended boundaries.

Monitoring would focus on checking that responses remain supportive, non-judgmental, and aligned with the purpose of the tool.

Regular review also helps ensure that the system continues to respect the principles it was designed around: safety, transparency, and minimal data handling.
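
One lightweight way to support such a review is a repeatable check that runs a fixed set of thoughts through the system and flags responses that drift outside its boundaries. The sketch below is a hypothetical starting point, not an existing Flippy feature: the rules, the word limit, and the marker phrases are assumptions, and simple string checks cannot replace a human reading the responses.

```python
from typing import Callable

# Sample inputs reused from the examples earlier in this article.
SAMPLE_THOUGHTS = [
    "I feel like I failed today.",
    "Everything feels overwhelming.",
    "I'm not good enough.",
]
# Hypothetical rules; a real review would define and maintain its own.
ADVICE_MARKERS = ("you should", "you need to", "you must")
MAX_WORDS = 40


def review_response(response: str) -> list[str]:
    """Return the boundary issues found in a single response."""
    issues = []
    if len(response.split()) > MAX_WORDS:
        issues.append("too long")
    lowered = response.lower()
    if any(marker in lowered for marker in ADVICE_MARKERS):
        issues.append("gives advice")
    return issues


def run_review(reframe: Callable[[str], str]) -> dict[str, list[str]]:
    """Run every sample thought through the system and collect flagged responses."""
    report = {}
    for thought in SAMPLE_THOUGHTS:
        issues = review_response(reframe(thought))
        if issues:
            report[thought] = issues
    return report


print(run_review(lambda thought: "Maybe this moment is only one part of the day."))  # {}
```

Running the same check after a model, prompt, or infrastructure change gives a quick signal about whether a closer manual review is needed.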

Responsible AI design therefore does not end when a product is released. It continues through observation, evaluation, and careful updates as the system evolves.

Flippy started as a playful vibecoding experiment — a way to explore how quickly something could be built with AI-assisted development tools. But once the system allowed people to enter real thoughts, the project began to feel different.

The question was no longer only how to make the interaction work. It became how to make it behave responsibly.

That shift brought new design priorities into focus: privacy, transparency, clear system limits, and user control. What began as a small technical experiment became a more serious exploration of how AI products can stay simple without becoming careless.

Flippy showed me that building with AI is not only about speed or experimentation. It is also about understanding the responsibility that comes with designing systems that interact with people’s inner lives.

As the concept matured, Flippy evolved from a single reframing interaction into a lightweight reflection product designed with app distribution in mind.

The experience expanded into a small reflection flow, including a journaling feature that allows users to save responses and revisit them later. This created a gentler, more continuous experience — one that helps users notice how their perspective can shift over time.

The interface follows patterns familiar from wellness products, using calm visuals and a consistent design system to support a focused, low-pressure interaction. Transparency and control remain part of the product itself, so users understand how the system works and stay in charge of what is saved.

Flippy remains intentionally lightweight: a small tool designed to help people pause, reframe a thought, and move forward with a slightly different perspective.